Frame-synchronous Blind Audio Watermarking for Tamper Proofing and Self-Recovery

DOI:

https://doi.org/10.46604/aiti.2020.4138

Keywords:

Blind audio watermarking, lifting wavelet transform, 2N-ary adaptive quantization modulation, rational dither modulation, tamper proofing, self-recovery

Abstract

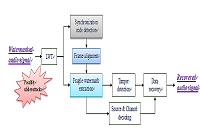

This paper presents a lifting wavelet transform (LWT)-based blind audio watermarking scheme designed for tamper detection and self-recovery. Following a 3-level LWT decomposition of the host audio, the coefficients in selected subbands are partitioned into frames for watermarking. To serve the different purposes of the watermarking application, the binary information is packed into two groups: frame-related data are embedded in the approximation subband using rational dither modulation, while the source-channel coded bit sequence of the host audio is hidden inside the 2nd- and 3rd-detail subbands using 2N-ary adaptive quantization index modulation. The frame-related data consist of a synchronization code used for frame alignment and a composite message gathered from four adjacent frames for content authentication. To endow the proposed scheme with a self-recovery capability, hash comparison is used to identify tampered frames and a Reed–Solomon code is adopted to correct symbol errors. The experimental results indicate that the proposed scheme can accurately locate and recover the tampered regions of the audio signal. The frame synchronization mechanism enables the scheme to resist cropping and replacement attacks, neither of which could be handled by previous watermarking schemes. Furthermore, as revealed by the perceptual evaluation of audio quality measures, the quality degradation caused by watermark embedding is minor. With these merits, the proposed scheme can find various applications in ownership protection and content authentication.
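The general idea of hiding bits in wavelet coefficients by quantization can be illustrated with a minimal sketch. It is not the scheme from the paper: it assumes a single-level Haar lifting step instead of the 3-level LWT, and a plain binary quantization index modulation with a fixed step size instead of rational dither modulation or 2N-ary adaptive quantization index modulation. All function names (`haar_lwt`, `haar_ilwt`, `qim_embed`, `qim_extract`, `embed_bits`, `extract_bits`) are hypothetical and chosen only for illustration.

```python
def haar_lwt(x):
    """One level of a Haar lifting transform: split, predict, update."""
    even, odd = x[0::2], x[1::2]
    detail = [o - e for e, o in zip(even, odd)]         # predict step
    approx = [e + d / 2 for e, d in zip(even, detail)]  # update step
    return approx, detail


def haar_ilwt(approx, detail):
    """Invert haar_lwt by undoing the update and predict steps."""
    even = [a - d / 2 for a, d in zip(approx, detail)]
    odd = [d + e for e, d in zip(even, detail)]
    x = [0.0] * (2 * len(even))
    x[0::2], x[1::2] = even, odd
    return x


def qim_embed(c, bit, step):
    """Move c to the nearest point of the lattice assigned to `bit`.

    Bit 0 uses multiples of `step`; bit 1 uses the same lattice
    shifted by half a step.
    """
    offset = bit * step / 2
    return round((c - offset) / step) * step + offset


def qim_extract(c, step):
    """Return the bit whose lattice lies closest to c (blind detection)."""
    d0 = abs(c - qim_embed(c, 0, step))
    d1 = abs(c - qim_embed(c, 1, step))
    return 0 if d0 <= d1 else 1


def embed_bits(signal, bits, step):
    """Embed one bit per approximation coefficient (assumed layout)."""
    approx, detail = haar_lwt(signal)
    for i, b in enumerate(bits):
        approx[i] = qim_embed(approx[i], b, step)
    return haar_ilwt(approx, detail)


def extract_bits(signal, n_bits, step):
    """Recover n_bits from the approximation coefficients, no host needed."""
    approx, _ = haar_lwt(signal)
    return [qim_extract(a, step) for a in approx[:n_bits]]


if __name__ == "__main__":
    host = [0.12, -0.4, 0.33, 0.9, -0.71, 0.05, 0.6, -0.2]
    bits = [1, 0, 1, 1]
    marked = embed_bits(host, bits, step=0.1)
    print(extract_bits(marked, len(bits), step=0.1) == bits)
```

The extractor needs only the step size, not the original audio, which is what makes this kind of embedding blind. A fixed step is fragile under amplitude scaling; that weakness is what gain-invariant variants such as rational dither modulation are designed to address.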

License

Submission of a manuscript implies that the work described has not been published before; that it is not under consideration for publication elsewhere; and that, if and when the manuscript is accepted for publication, authors can retain copyright of their article with no restrictions.

Since Jan. 01, 2019, AITI has published new articles under the Creative Commons Attribution Non-Commercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution Non-Commercial (CC-BY-NC) License permits use, distribution and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.