Tea Verification Using Triplet Loss Convolutional Network

DOI:

https://doi.org/10.46604/aiti.2021.6939

Keywords:

convolutional neural network, tea image classification, tea image verification, triplet loss

Abstract

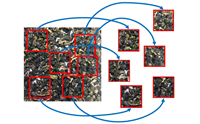

To address tea image classification, this study uses a triplet-loss convolutional neural network to classify six classes of high-mountain oolong tea. Instead of the traditional deep learning approach of training on local features of tea images, the study proposes an image verification approach that learns the global feature of a tea image by integrating the distributed tea-leaf features of all its sub-images and classifying with a majority voting mechanism. The results show that the proposed approach works on small-sample datasets and achieves higher accuracy than a standard transfer learning approach, with an average accuracy of 99.54%.
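The two ideas named in the abstract, a triplet loss on embeddings and a majority vote over sub-images, can be illustrated with a minimal sketch. It is not the authors' implementation: the loss follows the standard FaceNet-style formulation, while the margin value, the use of a single reference embedding per class, the function names, and the toy 2-D vectors are all assumptions made for illustration.

```python
from collections import Counter


def squared_distance(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def triplet_loss(anchor, positive, negative, margin=0.2):
    """FaceNet-style triplet loss for one (anchor, positive, negative) triplet.

    The margin value is an illustrative assumption, not taken from the paper.
    """
    return max(
        squared_distance(anchor, positive)
        - squared_distance(anchor, negative)
        + margin,
        0.0,
    )


def classify_by_majority_vote(sub_image_embeddings, class_references):
    """Assign each sub-image to its nearest class reference, then vote.

    class_references maps a class label to one reference embedding
    (a hypothetical simplification of the verification step).
    """
    votes = Counter(
        min(
            class_references,
            key=lambda label: squared_distance(emb, class_references[label]),
        )
        for emb in sub_image_embeddings
    )
    return votes.most_common(1)[0][0]


# Toy 2-D embeddings; real embeddings would come from the trained CNN.
references = {"tea_A": [0.0, 0.0], "tea_B": [1.0, 1.0]}
sub_images = [[0.1, 0.0], [0.2, 0.1], [0.9, 1.0]]
predicted = classify_by_majority_vote(sub_images, references)
```

In this toy case two of the three sub-images lie closest to the `tea_A` reference, so the vote assigns the whole image to `tea_A` even though one sub-image disagrees. This shows how aggregating over sub-images can absorb errors made on individual patches.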


License

Submission of a manuscript implies that the work described has not been published before and that it is not under consideration for publication elsewhere. If and when the manuscript is accepted for publication, authors can retain copyright in their articles with no restrictions.

Since January 1, 2019, AITI has published new articles under the Creative Commons Attribution Non-Commercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution Non-Commercial (CC-BY-NC) License permits use, distribution and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.