Personalized Clothing Prediction Algorithm Based on Multi-modal Feature Fusion

DOI:

https://doi.org/10.46604/ijeti.2024.13394

Keywords:

fashion consumers, image, text data, personalized, multi-modal fusion

Abstract

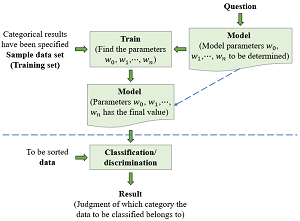

With the spread of information technology and rising material living standards, fashion consumers face the daunting challenge of making informed choices from massive amounts of data. This study combines deep learning with sales data to analyze the personalized preferences of fashion consumers and to predict fashion clothing categories, helping consumers make well-informed decisions. The Visuelle dataset contains 5,355 apparel products and 45 MB of sales data, covering image data, text attributes, and time series data. The paper proposes a novel 1DCNN-2DCNN deep convolutional neural network model for the multi-modal fusion of clothing images and sales text data. In the experiments, the proposed model achieves an accuracy of 99.59%, a recall of 99.60%, an F1 score of 98.01%, a macro average of 98.04%, and a weighted average of 98.00%. A comparative analysis of four hybrid models highlights the advantage of the proposed model in capturing personalized preferences.
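The general idea of fusing a 1D CNN branch with a 2D CNN branch can be illustrated with a minimal, dependency-free sketch. This is not the authors' architecture: filter counts, kernel sizes, max pooling, concatenation-based late fusion, and the names `FusionSketch`, `conv1d`, and `conv2d` are all hypothetical choices made for illustration, and the weights are random and untrained. The 1D branch stands in for an encoded sales or text sequence, and the 2D branch stands in for a single-channel clothing image.

```python
import math
import random


def conv1d(signal, kernel, bias=0.0):
    """Valid (unpadded) 1-D cross-correlation followed by ReLU."""
    k = len(kernel)
    out = []
    for i in range(len(signal) - k + 1):
        s = sum(signal[i + j] * kernel[j] for j in range(k)) + bias
        out.append(max(0.0, s))
    return out


def conv2d(image, kernel, bias=0.0):
    """Valid 2-D cross-correlation over a single-channel image, then ReLU."""
    kh, kw = len(kernel), len(kernel[0])
    h, w = len(image), len(image[0])
    out = []
    for r in range(h - kh + 1):
        row = []
        for c in range(w - kw + 1):
            s = bias
            for i in range(kh):
                for j in range(kw):
                    s += image[r + i][c + j] * kernel[i][j]
            row.append(max(0.0, s))
        out.append(row)
    return out


def softmax(logits):
    """Numerically stable softmax over a list of class scores."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class FusionSketch:
    """Hypothetical late-fusion classifier (random, untrained weights).

    Each 1-D filter scans the sequence branch and each 2-D filter scans the
    image branch; every feature map is reduced by global max pooling, the
    pooled values are concatenated, and a linear layer maps them to classes.
    """

    def __init__(self, n_classes, n_filters_1d=4, n_filters_2d=4, k1=3, k2=3, seed=0):
        rng = random.Random(seed)
        self.k1 = [[rng.uniform(-1, 1) for _ in range(k1)] for _ in range(n_filters_1d)]
        self.k2 = [[[rng.uniform(-1, 1) for _ in range(k2)] for _ in range(k2)]
                   for _ in range(n_filters_2d)]
        n_feat = n_filters_1d + n_filters_2d
        self.w = [[rng.uniform(-1, 1) for _ in range(n_feat)] for _ in range(n_classes)]
        self.b = [0.0] * n_classes

    def features(self, sequence, image):
        seq_feats = [max(conv1d(sequence, k)) for k in self.k1]
        img_feats = [max(max(row) for row in conv2d(image, k)) for k in self.k2]
        return seq_feats + img_feats  # late fusion: concatenate both branches

    def predict_proba(self, sequence, image):
        f = self.features(sequence, image)
        logits = [sum(wi * fi for wi, fi in zip(row, f)) + bi
                  for row, bi in zip(self.w, self.b)]
        return softmax(logits)


if __name__ == "__main__":
    model = FusionSketch(n_classes=5)
    seq = [0.1, 0.4, 0.3, 0.8, 0.5, 0.2]  # toy sequence input
    img = [[(r * 8 + c) / 64 for c in range(8)] for r in range(8)]  # toy 8x8 image
    print(model.predict_proba(seq, img))
```

Concatenating pooled features is only one way to fuse modalities. Attention-based or intermediate fusion are common alternatives, and the abstract does not specify which scheme the paper uses.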

References

X. Wu and L. Zhu, “Application of Product Form Recognition Combined with Deep Learning Algorithm,” Computer-Aided Design & Applications, vol. 21, no. S15, pp. 54-68, 2024.

S. Yuan, L. Zhong, and L. Li, “WhatFits- Deep Learning for Clothing Collocation,” 7th International Conference on Behavioural and Social Computing, pp. 1-4, November 2020.

Z. He, Y. Li, X. Shi, P. Li, and W. Huang, “Multi-Deep Features Fusion Algorithm for Clothing Image Recognition,” 8th International Conference on Digital Home, pp. 104-109, September 2020.

Z. W. Wang, Y. Y. Pu, X. Wang, Z. P. Zhao, D. Xu, and W. H. Qian, “Accurate Retrieval of Multi-Scale Clothing Images Based on Multi-Feature Fusion,” Chinese Journal of Computers, vol. 43, no. 4, pp. 740-754, 2020. (In Chinese)

S. S. Islam, E. K. Dey, M. N. A. Tawhid, and B. M. M. Hossain, “A CNN Based Approach for Garments Texture Design Classification,” Advances in Technology Innovation, vol. 2, no. 4, pp. 119-125, October 2017.

J. Zhao, “The Evolution of Chinese Traditional Ethnic Clothing Design Style Based on Interactive Dichroism Algorithm,” Applied Mathematics and Nonlinear Sciences, vol. 9, no. 1, pp. 1-15, January 2024.

X. Han, “Research on Clothing Personalized Recommendation Algorithm Based on Improved Collaborative Filtering Algorithm,” 3rd International Conference on Internet of Things and Smart City (IoTSC), vol. 12708, article no. 127080C, June 2023.

P. Jing, K. Cui, W. Guan, L. Nie, and Y. Su, “Category-Aware Multimodal Attention Network for Fashion Compatibility Modeling,” IEEE Transactions on Multimedia, vol. 25, pp. 9120-9131, 2023.

L. Liu, H. Zhang, Q. Li, J. Ma, and Z. Zhang, “Collocated Clothing Synthesis with GANs Aided by Textual Information: A Multi-Modal Framework,” ACM Transactions on Multimedia Computing, Communications, and Applications, vol. 20, no. 1, article no. 26, January 2024.

M. S. Amin, C. Wang, and S. Jabeen, “Fashion Sub-Categories and Attributes Prediction Model Using Deep Learning,” The Visual Computer, vol. 39, no. 9, pp. 3851-3864, September 2023.

H. Zhang, W. Huang, L. Liu, and T. W. S. Chow, “Learning to Match Clothing from Textual Feature-Based Compatible Relationships,” IEEE Transactions on Industrial Informatics, vol. 16, no. 11, pp. 6750-6759, November 2020.

Y. Chen, Z. Zhou, G. Lin, X. Chen, and Z. Su, “Personalized Outfit Compatibility Prediction Based on Regional Attention,” 9th International Conference on Digital Home, pp. 75-80, October 2022.

D. Kim, K. Saito, S. Mishra, S. Sclaroff, K. Saenko, and B. A. Plummer, “Self-Supervised Visual Attribute Learning for Fashion Compatibility,” https://arxiv.org/pdf/2008.00348.pdf, August 12, 2021.

S. Lu, X. Zhu, Y. Wu, X. Wan, and F. Gao, “Outfit Compatibility Prediction with Multi-Layered Feature Fusion Network,” Pattern Recognition Letters, vol. 147, pp. 150-156, July 2021.

J. Shi, X. Song, Z. Liu, and L. Nie, “Fashion Graph-Enhanced Personalized Complementary Clothing Recommendation,” Journal of Cyber Security, vol. 6, no. 5, pp. 181-198, 2021. (In Chinese)

Y. Wang, L. Liu, X. Fu, and L. Liu, “MCCP: Multi-Modal Fashion Compatibility and Conditional Preference Model for Personalized Clothing Recommendation,” Multimedia Tools and Applications, vol. 83, no. 4, pp. 9621-9645, January 2024.

V. Ekambaram, K. Manglik, S. Mukherjee, S. S. K. Sajja, S. Dwivedi, and V. Raykar, “Attention Based Multi-Modal New Product Sales Time-Series Forecasting,” Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, pp. 3110-3118, August 2020.

G. Skenderi, C. Joppi, M. Denitto, B. Scarpa, and M. Cristani, “The Multi-Modal Universe of Fast-Fashion: The Visuelle 2.0 Benchmark,” https://arxiv.org/pdf/2204.06972.pdf, April 14, 2022.

J. Yang, C. Li, P. Zhang, B. Xiao, C. Liu, L. Yuan, et al., “Unified Contrastive Learning in Image-Text-Label Space,” https://arxiv.org/pdf/2204.03610.pdf, April 7, 2022.

M. Wu, G. Zhang, and C. Jin, “Time Series Prediction Model Based on Multimodal Information Fusion,” Journal of Computer Applications, vol. 42, no. 8, pp. 2326-2332, August 2022. (In Chinese)

License

Copyright (c) 2024 Rong Liu, Annie Anak Joseph, Miaomiao Xin, Hongyan Zang, Wanzhen Wang, Shengqun Zhang

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Copyright Notice

Submission of a manuscript implies that the work described has not been published before and that it is not under consideration for publication elsewhere. Authors retain copyright in their articles with no restrictions. Authors may also post the final, peer-reviewed manuscript version (postprint) to any repository or website.

Since Jan. 1, 2019, IJETI has published new articles under the Creative Commons Attribution Non-Commercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution Non-Commercial (CC BY-NC) License permits use, distribution, and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.
