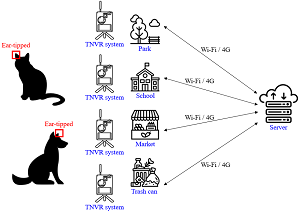

Intelligent TNVR Ear-Tag Recognition and Monitoring System for Stray Animal Management

DOI: https://doi.org/10.46604/ijeti.2026.15640

Keywords: TNVR, stray animal, YOLOv8, image recognition

Abstract

This study aims to enhance stray animal management by improving the efficiency and sustainability of the trap-neuter-vaccinate-return (TNVR) process. An intelligent monitoring system integrating image recognition and radar sensing is proposed for real-time detection and identification. The system uses a domain-specific ear-tag recognition model based on OpenCV preprocessing and YOLOv8, achieving an accuracy of 91% under various environmental conditions. Captured data are automatically uploaded via 4G to a centralized server, supporting continuous monitoring and instant alerts. Designed for high-density urban settings, the system reduces manual workload and improves decision-making efficiency, contributing to sustainable and humane stray animal control. Although the proposed system demonstrates high detection accuracy and robust performance under real-world conditions, the current evaluation was conducted on a moderate-scale dataset; future work will focus on large-scale deployment and cross-context validation to further examine the system's generalizability.
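
The abstract describes a flow from detection to server upload and alerting without giving implementation details. The following is a minimal sketch of how one capture event might be packaged for upload, not the authors' implementation. The class names `ear_tag` and `no_ear_tag`, the 0.5 confidence threshold, the payload fields, and the rule that an untagged animal raises an alert are all assumptions made for illustration; the detector output is made-up sample data standing in for a YOLOv8 result.

```python
import json
from dataclasses import dataclass, asdict
from typing import List

# Hypothetical class names; the abstract does not list the model's label set.
TAGGED = "ear_tag"
UNTAGGED = "no_ear_tag"


@dataclass
class Detection:
    label: str         # class name predicted by the detector
    confidence: float  # detector score in [0, 1]


def filter_detections(detections: List[Detection], min_conf: float = 0.5) -> List[Detection]:
    """Keep detections at or above an assumed confidence threshold."""
    return [d for d in detections if d.confidence >= min_conf]


def build_payload(device_id: str, timestamp: str, detections: List[Detection]) -> str:
    """Serialize one capture event as JSON for upload to a server."""
    kept = filter_detections(detections)
    record = {
        "device_id": device_id,
        "timestamp": timestamp,
        "detections": [asdict(d) for d in kept],
        # Assumed alert rule: flag events that include an animal without an ear tag.
        "alert": any(d.label == UNTAGGED for d in kept),
    }
    return json.dumps(record, sort_keys=True)


# Made-up detector output standing in for a real YOLOv8 result.
sample = [Detection(TAGGED, 0.93), Detection(UNTAGGED, 0.71), Detection(UNTAGGED, 0.20)]
payload = build_payload("cam-01", "2026-01-01T08:00:00Z", sample)
```

Keeping the payload small and self-describing would suit a metered 4G link, and computing the alert flag on the device would let the server forward notifications without re-running inference; both are design guesses rather than details taken from the paper.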

License

Copyright (c) 2026 Zhi-Yu Wang, Yung-Hoh Sheu, Bo-Kai Yang, Chi-Jen Chen, Chi-Wen Chen

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Copyright Notice

Submission of a manuscript implies that the work described has not been published before and that it is not under consideration for publication elsewhere. If and when the manuscript is accepted for publication, authors can retain copyright in their articles with no restrictions. Authors can also post the final, peer-reviewed manuscript version (postprint) to any repository or website.

Since Jan. 01, 2019, IJETI has published new articles under the Creative Commons Attribution Non-Commercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution Non-Commercial (CC-BY-NC) License permits use, distribution and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.
