Using Deep Learning Technology to Realize the Automatic Control Program of Robot Arm Based on Hand Gesture Recognition

DOI:

https://doi.org/10.46604/ijeti.2021.7342

Keywords:

deep learning, hand gesture recognition, human robot interaction, YOLO

Abstract

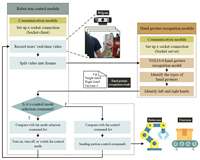

In this study, robot arm control, computer vision, and deep learning technologies are combined to realize an automatic control program. The program has three functional modules: the hand gesture recognition module, the robot arm control module, and the communication module. The hand gesture recognition module captures images of the user’s hand gestures and recognizes the gestures’ features using the YOLOv4 algorithm. The communication module transmits the recognition results to the robot arm control module, which analyzes and executes the received hand gesture commands. With the proposed program, engineers can interact with the robot arm through hand gestures, teach the robot arm to record a trajectory with simple hand movements, and call different scripts to satisfy robot motion requirements in an actual production environment.
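The three-module flow described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation: the detector is replaced by hand-written `Detection` values standing in for YOLOv4 output, the JSON message format is an assumption, and the gesture labels (`record`, `stop`, `run_script`) and all class and function names are hypothetical.

```python
import json
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Detection:
    """One detector output: a gesture label and its confidence score.

    Stands in for a YOLOv4 result; no real detector is called here.
    """
    label: str
    confidence: float


def pick_gesture(detections: List[Detection], threshold: float = 0.5) -> Optional[str]:
    """Recognition module: return the most confident label at or above
    the threshold, or None if no detection qualifies."""
    candidates = [d for d in detections if d.confidence >= threshold]
    if not candidates:
        return None
    return max(candidates, key=lambda d: d.confidence).label


def encode_command(gesture: str) -> bytes:
    """Communication module, sender side: serialize the result as JSON bytes
    (assumed message format)."""
    return json.dumps({"gesture": gesture}).encode("utf-8")


def decode_command(payload: bytes) -> str:
    """Communication module, receiver side: recover the gesture label."""
    return json.loads(payload.decode("utf-8"))["gesture"]


class ArmController:
    """Robot arm control module: maps gesture labels to actions.

    Actions only append to a log; a real controller would drive the arm.
    """

    def __init__(self) -> None:
        self.log: List[str] = []
        # Hypothetical gesture-to-action table.
        self._handlers: Dict[str, Callable[[], None]] = {
            "record": lambda: self.log.append("start recording trajectory"),
            "stop": lambda: self.log.append("stop recording trajectory"),
            "run_script": lambda: self.log.append("run stored script"),
        }

    def execute(self, payload: bytes) -> bool:
        """Decode a message and run its action; return False if unknown."""
        handler = self._handlers.get(decode_command(payload))
        if handler is None:
            return False
        handler()
        return True


if __name__ == "__main__":
    # Hand-written detections for one frame, in place of detector output.
    frame_detections = [Detection("record", 0.91), Detection("stop", 0.40)]
    gesture = pick_gesture(frame_detections)
    arm = ArmController()
    if gesture is not None:
        arm.execute(encode_command(gesture))
    print(arm.log)
```

Keeping the message encoding separate from both the detector and the controller mirrors the abstract's split into recognition, communication, and control, so that any one module could be swapped without touching the other two.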

Copyright Notice

Submission of a manuscript implies that the work described has not been published before and that it is not under consideration for publication elsewhere. Authors can retain copyright in their articles with no restrictions. Authors can also post the final, peer-reviewed manuscript version (postprint) to any repository or website.

Since January 1, 2019, IJETI has published new articles under the Creative Commons Attribution Non-Commercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution Non-Commercial (CC-BY-NC) License permits use, distribution and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.
