Enhancing Visual SLAM Robustness in Dynamic Scenes with YOLOv5-Assisted ORB-SLAM3

DOI: https://doi.org/10.46604/peti.2026.15235

Keywords:

ORB-SLAM3, YOLOv5, dynamic environments, pose estimation, visual SLAM

Abstract

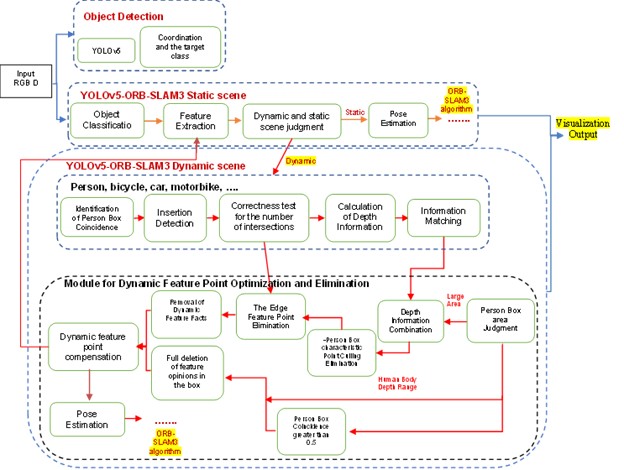

This study presents an enhanced visual SLAM (Simultaneous Localization and Mapping) framework that integrates ORB-SLAM3 with the YOLOv5 real-time object detector to improve pose accuracy in dynamic environments. ORB-SLAM3 performs robustly in static scenes, but it relies on tracking ORB features, so feature points on moving objects produce inconsistent matches that degrade its accuracy. To overcome this limitation, YOLOv5 is used to identify dynamic regions in each video frame, allowing the system to discard the feature points that fall inside those regions before matching. This filtering reduces the influence of moving objects on trajectory estimation and improves overall system robustness. The proposed method was evaluated on dynamic datasets, including the Bonn RGB-D Dynamic dataset and TUM RGB-D, and further validated in real-world experiments with an Intel RealSense D435i camera. Experimental results show substantial improvements in pose accuracy over both the baseline ORB-SLAM3 and the RTAB-Map system, confirming the effectiveness of the YOLOv5-assisted ORB-SLAM3 integration in dynamic scenes.
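The filtering step described in the abstract can be illustrated with a minimal sketch of bounding-box-based keypoint rejection. It assumes keypoints are given as (u, v) pixel coordinates and that detections are axis-aligned boxes with a class name and confidence score. The set of "dynamic" classes, the confidence threshold, and the box margin are hypothetical parameters chosen for illustration, not values taken from the paper, and ORB-SLAM3 itself is written in C++, so this Python version only shows the logic, not the authors' implementation.

```python
from typing import Iterable, List, NamedTuple, Sequence, Tuple


class Detection(NamedTuple):
    """One detector box in pixels: (x1, y1) top-left, (x2, y2) bottom-right."""
    x1: float
    y1: float
    x2: float
    y2: float
    confidence: float
    name: str


# Hypothetical choice: detector classes treated as potentially moving objects.
DYNAMIC_CLASSES = frozenset({"person"})


def dynamic_boxes(detections: Iterable[Detection],
                  conf_thresh: float = 0.5,
                  margin: float = 0.0) -> List[Tuple[float, float, float, float]]:
    """Keep confident boxes of dynamic classes, enlarged by `margin` pixels per side."""
    boxes = []
    for d in detections:
        if d.name in DYNAMIC_CLASSES and d.confidence >= conf_thresh:
            boxes.append((d.x1 - margin, d.y1 - margin, d.x2 + margin, d.y2 + margin))
    return boxes


def filter_keypoints(keypoints: Sequence[Tuple[float, float]],
                     detections: Iterable[Detection],
                     conf_thresh: float = 0.5,
                     margin: float = 0.0) -> List[int]:
    """Return indices of keypoints that lie outside every dynamic box.

    The surviving indices would select which keypoints and descriptors
    are passed on to feature matching.
    """
    boxes = dynamic_boxes(detections, conf_thresh, margin)
    kept = []
    for i, (u, v) in enumerate(keypoints):
        inside = any(x1 <= u <= x2 and y1 <= v <= y2 for x1, y1, x2, y2 in boxes)
        if not inside:
            kept.append(i)
    return kept


if __name__ == "__main__":
    kps = [(10.0, 10.0), (120.0, 80.0), (300.0, 200.0), (125.0, 150.0)]
    dets = [
        Detection(100, 50, 200, 180, 0.91, "person"),  # dynamic class: its keypoints are dropped
        Detection(280, 180, 320, 220, 0.88, "tv"),     # static class: ignored by the filter
    ]
    print(filter_keypoints(kps, dets))  # -> [0, 2]
```

With the Ultralytics torch.hub interface to YOLOv5, `results.pandas().xyxy[0]` returns per-detection `xmin`, `ymin`, `xmax`, `ymax`, `confidence`, and `name` columns, which could be mapped into the `Detection` tuples above. Masking whole boxes is coarse: it also discards static background inside each box, and the margin trades a few more lost static points for fewer surviving points on object edges.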



License

Copyright (c) 2026 Rajaa Wejood Ali, Heba Hakim, Dr. Mohammed Abd Ali Al-Ibadi

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Submission of a manuscript implies that the work described has not been published before and that it is not under consideration for publication elsewhere. If and when the manuscript is accepted for publication, authors can retain copyright of their article with no restrictions. Authors can also post the final, peer-reviewed manuscript version (postprint) to any repository or website.

Since Oct. 1, 2015, PETI has published new articles under the Creative Commons Attribution-NonCommercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution-NonCommercial (CC BY-NC) License permits use, distribution, and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.