Improving Cardiac Computed Tomography Scan Segmentation Using a U-Net Model with Continual Learning Techniques

DOI:

https://doi.org/10.46604/peti.2026.15705

Keywords:

medical image segmentation, CT scan segmentation, U-Net model, continual learning

Abstract

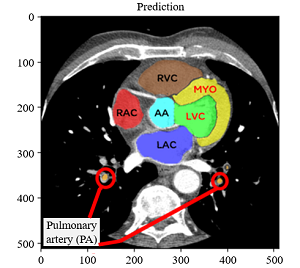

Accurate segmentation of cardiac structures in computed tomography (CT) scans is challenging because adjacent organs lie close together and have similar intensities. This study introduces an enhanced U-Net-based approach that incorporates continual learning together with class merging and separation strategies to improve cardiac CT segmentation. Anatomically related structures are first merged and later separated through class-specific heads, reducing boundary misclassification. Furthermore, pixel adjacency is employed to improve the delineation of complex cardiac regions. The proposed method is evaluated on the MM-WHS 2017 dataset, focusing on seven components: left ventricular cavity (LVC), right ventricular cavity (RVC), left atrium cavity (LAC), right atrium cavity (RAC), myocardium (MYO), ascending aorta (AA), and pulmonary artery (PA). Experimental results show that the proposed model achieves a Dice score coefficient (DSC) of 94.08% and an intersection over union (IoU) of 92.03%, outperforming baseline U-Net models. These findings demonstrate the effectiveness of structure-aware learning in advancing cardiac CT segmentation.
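For readers unfamiliar with the two metrics reported above, the following is a minimal sketch of how a per-class DSC and IoU can be computed from integer label maps. It is an illustration only, not the authors' evaluation code: the function name `dice_and_iou`, the toy arrays, and the convention of returning 1.0 when a label is absent from both maps are assumptions made for this example.

```python
import numpy as np


def dice_and_iou(pred, target, label):
    """Return (DSC, IoU) for one label in two integer label maps of equal shape.

    Hypothetical helper for illustration; not taken from the paper.
    """
    pred_mask = pred == label
    target_mask = target == label
    intersection = np.logical_and(pred_mask, target_mask).sum()
    total = pred_mask.sum() + target_mask.sum()
    union = total - intersection
    if total == 0:
        # Assumed convention: a label missing from both maps counts as a perfect match.
        return 1.0, 1.0
    dsc = 2.0 * intersection / total
    iou = intersection / union
    return float(dsc), float(iou)


# Toy 3x3 label maps (0 = background, 1 and 2 = two arbitrary structures).
target = np.array([[0, 1, 1],
                   [0, 1, 1],
                   [0, 0, 2]])
pred = np.array([[0, 1, 1],
                 [0, 0, 1],
                 [0, 2, 2]])

dsc, iou = dice_and_iou(pred, target, label=1)
print(f"label 1: DSC={dsc:.4f}, IoU={iou:.4f}")
```

In a multi-class setting such as the seven cardiac structures listed above, one common choice is to compute the metrics per class and then average them; whether the reported figures were aggregated this way is not stated in the abstract.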

License

Copyright (c) 2026 Wanida Khamprapai, Wassaphas Thongsopa, Chayanon Deejaiwong, Jirawan Charoensuk, Seksan Mathulaprangsan, Chalothon Chootong

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Submission of a manuscript implies that the work described has not been published before and that it is not under consideration for publication elsewhere. Authors can retain copyright of their articles with no restrictions. Authors can also post the final, peer-reviewed manuscript version (postprint) to any repository or website.

Since Oct. 01, 2015, PETI has published new articles under the Creative Commons Attribution Non-Commercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution Non-Commercial (CC BY-NC) License permits use, distribution, and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.