Motorcycle Parking Violation Detection Using YOLOv12 Segmentation and ROI-Guided Orientation Analysis

DOI: https://doi.org/10.46604/peti.2026.16020

Keywords: parking violation detection, YOLOv12, image segmentation, orientation analysis, region of interest

Abstract

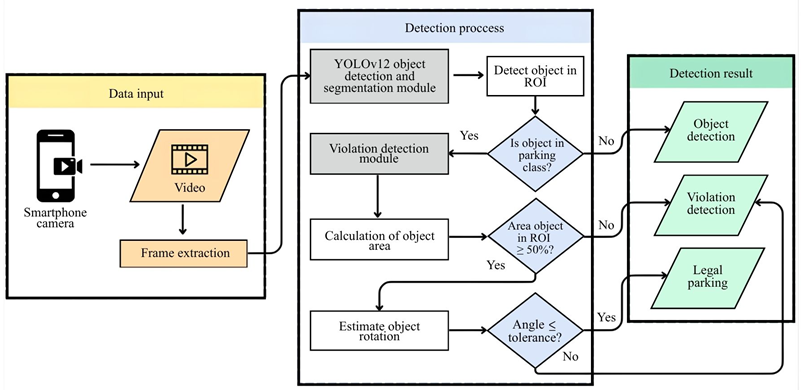

This study aims to improve the accuracy of legal and illegal motorcycle parking classification by proposing a computer vision-based detection system using YOLOv12 segmentation and region-of-interest (ROI)-guided orientation analysis. The proposed system integrates object segmentation, ROI mapping, and computation of the angular deviation between vehicle orientation and parking guides. To determine the optimal orientation tolerance, experiments are conducted using multiple angular thresholds under identical datasets and testing scenarios. The system is evaluated across three locations using confusion-matrix-based performance metrics. The results show that a 30° tolerance yields the best performance, achieving an average accuracy of 87.29%, precision of 93.14%, recall of 92.23%, and F1-score of 92.68%. These findings indicate that ROI-guided orientation analysis enhances the reliability of motorcycle parking violation detection.
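
The orientation check summarized in the abstract can be illustrated with a minimal sketch. The abstract does not state how the vehicle orientation is extracted from the segmentation output, so the example below assumes a principal-axis (PCA) estimate over the pixels of a binary mask and assumes that each parking guide is given as two ROI points. All function names are hypothetical, and only the 30° tolerance comes from the reported results.

```python
import numpy as np


def principal_axis_angle(mask: np.ndarray) -> float:
    """Angle in degrees of the dominant axis of a binary mask (assumed PCA estimate)."""
    ys, xs = np.nonzero(mask)
    pts = np.stack([xs, ys], axis=1).astype(float)
    pts -= pts.mean(axis=0)
    # The eigenvector with the largest eigenvalue points along the long axis of the shape.
    eigvals, eigvecs = np.linalg.eigh(np.cov(pts, rowvar=False))
    vx, vy = eigvecs[:, np.argmax(eigvals)]
    return float(np.degrees(np.arctan2(vy, vx)) % 180.0)


def line_angle(p0, p1) -> float:
    """Angle in degrees of the parking guide through two ROI points (hypothetical input format)."""
    dx, dy = p1[0] - p0[0], p1[1] - p0[1]
    return float(np.degrees(np.arctan2(dy, dx)) % 180.0)


def angular_deviation(a: float, b: float) -> float:
    """Smallest angle between two undirected axes, in the range [0, 90] degrees."""
    d = abs(a - b) % 180.0
    return min(d, 180.0 - d)


def is_legal(mask: np.ndarray, guide_p0, guide_p1, tolerance_deg: float = 30.0) -> bool:
    """Treat a vehicle as legally parked when its axis stays within the tolerance of the guide."""
    deviation = angular_deviation(
        principal_axis_angle(mask), line_angle(guide_p0, guide_p1)
    )
    return deviation <= tolerance_deg
```

Folding the deviation into [0, 90] degrees treats a motorcycle facing either way along the guide as aligned, which is one plausible reading of an orientation tolerance; a direction-sensitive rule would need a signed heading instead.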

Copyright (c) 2026 Muh Fajrin Bakri, Shahnaz Tasha Kurnia, Muhammad Fajar B, Andi Baso Kaswar, Dyah Darma Andayani, Fhatiah Adiba, Sanatang, Syahrul

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Submission of a manuscript implies that the work described has not been published before and that it is not under consideration for publication elsewhere. Authors retain copyright of their articles with no restrictions. Authors may also post the final, peer-reviewed manuscript version (postprint) to any repository or website.

Since Oct. 01, 2015, PETI has published new articles under the Creative Commons Attribution Non-Commercial 4.0 International (CC BY-NC 4.0) License.

The Creative Commons Attribution Non-Commercial (CC BY-NC) License permits use, distribution, and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.